Amazon will spend $200 billion on infrastructure this year. Google, between $175 and $185 billion. Meta, up to $135 billion. Microsoft, on pace for $145 billion.

Combined, these four hyperscalers are investing close to $700 billion in 2026, nearly double what they spent in 2025. Goldman Sachs projects $1.15 trillion in cumulative cloud spending from 2025 through 2027, more than double the $477 billion these companies spent in the previous three-year period.

For two consecutive years, Wall Street’s consensus estimates for this spending turned out to be too low.

What $700 billion actually buys

This is not a software investment. Roughly 75% of the spending goes directly toward AI-related infrastructure: data centers, specialized chips, networking equipment, and the physical facilities that house and power them. That means concrete, fiber, cooling systems, and power plants.

The scale has no recent precedent. The closest comparison is the telecom buildout of the late 1990s and early 2000s, when carriers laid more fiber-optic cable in a few years than in all the years before combined. That buildout reshaped how every business communicated. The current one is reshaping how every business computes.

The energy wall

The binding constraint is not capital. It is electricity.

US data centers currently consume approximately 4.4% of the nation's total electricity. The Department of Energy projects that figure could reach as much as 12% by 2028. A single rack of AI servers draws 40 to 100 kilowatts, roughly ten times what a rack of traditional servers requires.

The grid cannot keep up. Approximately 70% of US grid infrastructure is approaching the end of its useful life. PJM Interconnection, which operates the largest US electric grid, projects a 49-gigawatt generation shortfall by 2028. In Northern Virginia, the most data-center-dense region in the world, grid connection requests now take four to seven years.

Ireland has imposed a cap on new data center grid connections in the Dublin region. The question is not whether energy will constrain AI expansion, but where and how severely.

What this means for every other company

If your company uses any cloud-based AI tool, your AI capability sits on infrastructure these four companies are building. The models you run, the speed you get, the price you pay: all of it flows from decisions made in boardrooms you will never enter.

Three planning assumptions deserve scrutiny:

Pricing will not stay where it is. The $700 billion is an investment seeking returns. Introductory and subsidized rates for AI services will adjust as providers pursue profitability on this unprecedented capital outlay.

Capacity is not guaranteed. Demand for advanced AI compute already exceeds supply. Energy constraints will tighten this further. Companies that assume they can scale their AI usage linearly may find themselves waiting in line.

Vendor concentration is a strategic risk. Four companies control the ground your AI strategy stands on. That concentration creates dependency, and dependency becomes vulnerability when any of these providers shifts priorities, adjusts pricing tiers, or hits its own infrastructure bottlenecks. Infrastructure is also only half the equation: when the people who built these models start leaving, the roadmap shifts regardless of how much hardware sits underneath it. Meta is already putting this infrastructure to work internally, with AI tools that are reshaping how its CEO accesses information.

The assumption worth questioning

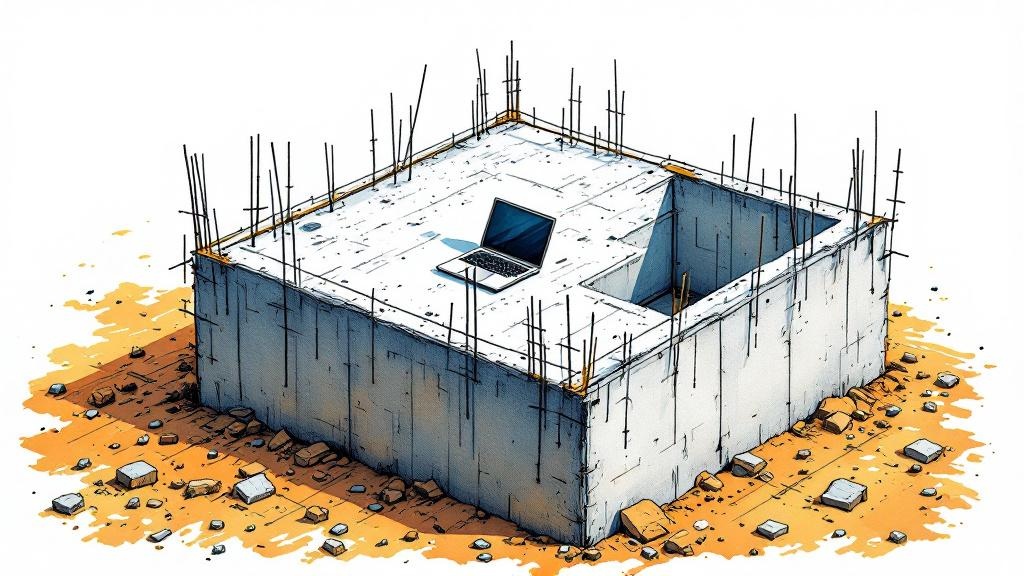

Most AI roadmaps treat infrastructure as a stable given: the compute will be there, the models will keep improving, the pricing will stay competitive. The $700 billion being poured into the ground right now suggests the providers themselves are not so sure. They are racing to build capacity because they believe demand will outstrip supply for years.

Your AI roadmap assumes stable ground. The ground under it is under construction.

When compute is this scarce, even OpenAI has to make hard choices about what to run. The Sora shutdown showed as much: 9.6 million users were not enough to justify the GPUs.

Related: "Oracle and Meta Are Cutting Tens of Thousands of Jobs" shows the second-order effect of this infrastructure race: when the bills come due, the workforce pays first.