The Enterprise AI Model Market Reshuffled in 24 Months. Here's How to Build a Decision Framework That Doesn't.

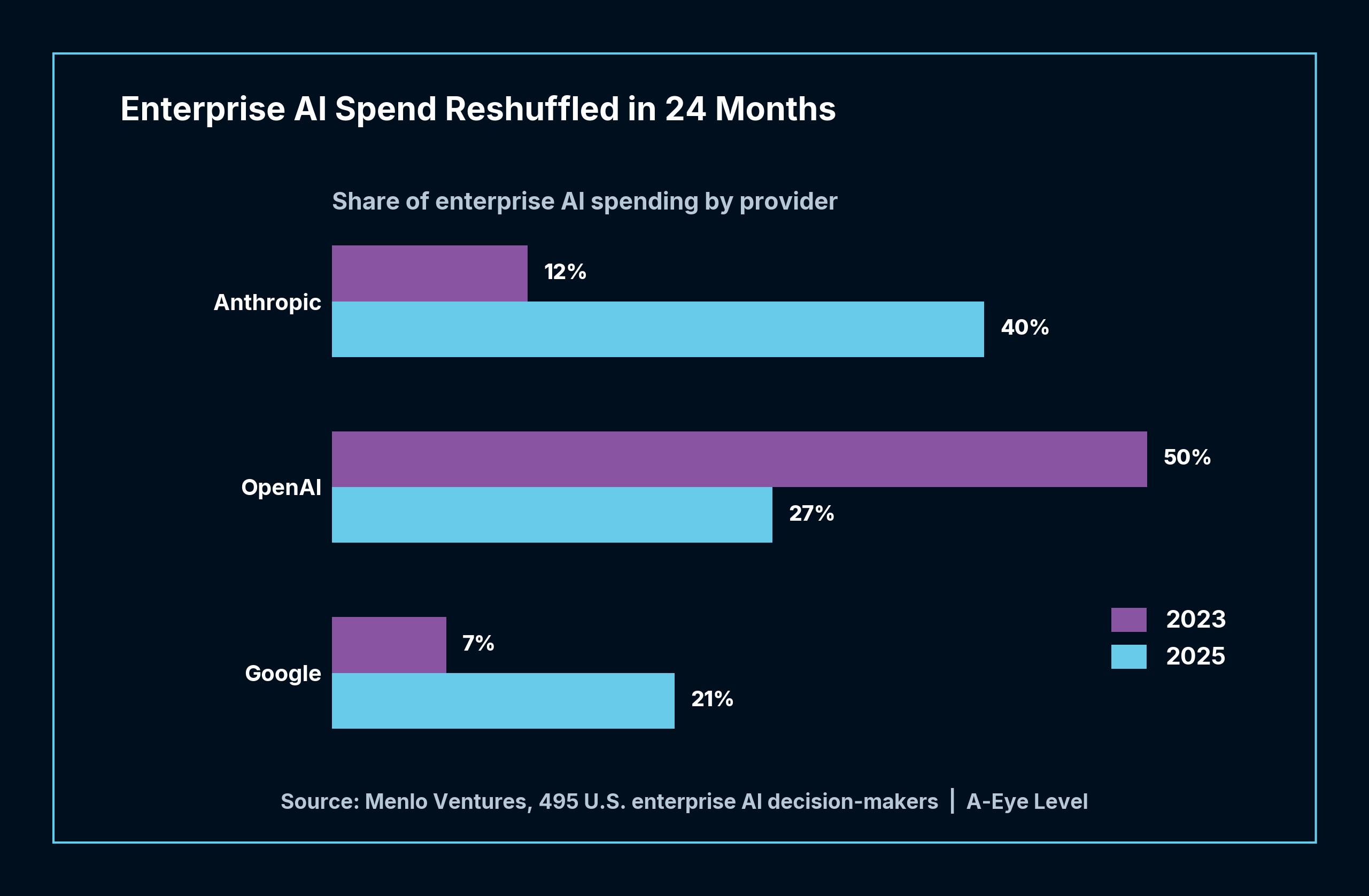

Anthropic’s share of enterprise AI spend went from 12% to 40% between 2023 and 2025. OpenAI dropped from 50% to 27%. Google climbed from 7% to 21%. According to Menlo Ventures’ survey of 495 U.S. enterprise AI decision-makers, the “best model” changed three times in 24 months, and total enterprise AI investment tripled from $11.5 billion to $37 billion in a single year.

Most companies are still choosing their AI model the same way they choose enterprise software: features, pricing, a vendor demo, a procurement cycle. That logic made sense when the market was concentrated and the leading option was clear. It makes less sense when the entire leaderboard reshuffles every year.

Two Variables That Are Collapsing

The two criteria that dominate most enterprise AI purchasing decisions are capability (which model performs best on our tasks) and cost (which model fits our budget). Both are converging faster than most procurement cycles can account for.

Gartner’s March 2026 forecast projects that the cost of running inference on a trillion-parameter model will drop more than 90% by 2030 compared to 2025 levels. The price variable that procurement teams negotiate today is on a steep downward curve, which means any deal anchored primarily to unit cost is optimizing for something that will look very different within a few years.

But falling unit costs do not automatically mean falling total bills. The same Gartner report flags that agentic AI models, the kind that handle multi-step tasks autonomously, require 5 to 30 times more tokens per task than a standard chatbot interaction.

That creates a dynamic most budgeting models do not account for: an organization that deploys agentic AI at scale may find its inference volume growing faster than unit costs decline, so its total bill rises even as the price per token falls.
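A rough back-of-the-envelope sketch makes the arithmetic concrete. It combines the two Gartner figures cited above (a unit-cost decline of 90% and a token multiplier of 5x to 30x) and assumes, purely for illustration, that both apply to the same task at the same time. The function name and the baseline of 1.0 are hypothetical, not taken from the Gartner report.

```python
def relative_cost_per_task(unit_cost_decline: float, token_multiplier: float) -> float:
    """Cost of one agentic task relative to one chatbot interaction
    at today's prices (baseline = 1.0). Illustrative assumption only."""
    remaining_unit_cost = 1.0 - unit_cost_decline  # e.g. 0.10 after a 90% drop
    return remaining_unit_cost * token_multiplier


# Low and high ends of the 5x to 30x token range cited in the text.
for multiplier in (5, 30):
    ratio = relative_cost_per_task(0.90, multiplier)
    print(f"{multiplier}x tokens -> {ratio:.1f}x baseline cost per task")
# 5x tokens -> 0.5x baseline cost per task
# 30x tokens -> 3.0x baseline cost per task
```

Under these assumptions, the cost per task lands anywhere from half the baseline to three times it, before counting any growth in the number of tasks being run.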

On the capability side, model performance is converging across the major providers. The gaps that existed in 2023 between the top-tier and second-tier models have narrowed substantially. Harvard Business Review’s survey of 1,006 global senior executives found that the companies seeing the highest returns from AI were not the ones that picked the “best” model; they were the ones with “clarity on what kind of AI the organization is trying to achieve” before they selected anything.

The Governance Gap That Matters More Than the Model

McKinsey’s 2026 State of AI Trust survey, covering roughly 500 organizations, found that average AI governance maturity sits at 2.3 out of 5. Only about one-third of organizations scored 3 or above on governance readiness.

The most telling finding is the ownership gap. Organizations with clear ownership of AI decisions (a named person or team responsible for model selection, evaluation, and re-evaluation) scored 2.6 on the maturity scale. Those without clear ownership scored 1.8, a gap of 0.8 points, despite having access to the same models and the same market information.

McKinsey’s framing is worth noting: the question for CEOs is not “how do we add AI?” but “how do we want decisions to be made, work to flow, and humans to engage?” That reframe shifts the conversation from a vendor evaluation exercise to an operating model conversation, and the two require very different governance structures.

MIT’s Center for Information Systems Research published a framework for “minimum viable governance” in March 2026, designed specifically for the pace of generative AI adoption. Its central insight is that traditional governance structures cannot keep up with the speed at which new models, capabilities, and risk profiles emerge. The framework identifies five governance domains (principles, policies, people, processes, and platforms) and argues that organizations need lightweight, fast-cycling governance rather than comprehensive but slow review processes.

What a Decision Framework Actually Looks Like

The companies that will navigate the next reshuffle without scrambling are not the ones that picked the right model today. They are the ones that built a process for re-evaluating when the landscape shifts, and the landscape will keep shifting.

A functional AI model decision framework addresses four questions that most procurement processes skip entirely.

First, task specificity: not “which model is best?” but “best at what, specifically?” A model that leads on coding tasks may trail on document analysis or customer interaction. Data on the agent governance gap showed that 81% of organizations plan to expand AI agents, but most have not mapped which tasks those agents will handle or what quality threshold is acceptable.

Second, switching cost awareness. If your architecture locks you into a single provider’s API format, context window, or fine-tuning approach, the cost of switching when a better option emerges may be higher than the cost of the model itself. The organizations that treat model selection as a one-time decision are the most exposed when the market reshuffles.

Third, ownership. Someone in the organization needs to own not just the initial model selection but the ongoing re-evaluation cycle. McKinsey’s data shows that this single factor, clear ownership, is associated with a 0.8-point difference in governance maturity (2.6 versus 1.8). Without it, model decisions drift into procurement autopilot, and last year’s choice becomes this year’s default without anyone asking whether it still makes sense.

Fourth, evaluation criteria beyond benchmarks. As Forrester has emphasized in its evaluations of foundation models, the selection process should use criteria well beyond benchmark scores. Its framework applies 21 criteria across 10 providers and recommends “a balanced and longer-term outlook on how foundation models fit the needs of a complex enterprise.” Benchmark scores measure what a model can do in controlled conditions, not what it will do in your specific workflows with your specific data.
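The four questions above can be sketched as a small decision record. This is a minimal illustration under stated assumptions, not a reference implementation: every name here (`TextModel`, `TaskRequirement`, `ModelDecision`, `EchoModel`), the 90-day review interval, and the example tasks are hypothetical. The provider-neutral interface stands in for switching-cost awareness, per-task thresholds for task specificity, the `owner` field for ownership, and the organization's own pass/fail checks for criteria beyond benchmarks.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, Protocol


class TextModel(Protocol):
    """Provider-neutral interface: application code depends on this,
    not on any single vendor's SDK, which keeps switching costs low."""
    def complete(self, prompt: str) -> str: ...


@dataclass
class TaskRequirement:
    task: str                 # what the model must be good at, specifically
    quality_threshold: float  # minimum acceptable pass rate, 0.0 to 1.0
    # The organization's own cases: (prompt, check applied to the output).
    # Assumed non-empty; an empty list would divide by zero in score().
    cases: list[tuple[str, Callable[[str], bool]]]


def score(model: TextModel, req: TaskRequirement) -> float:
    """Fraction of the task's cases the model passes."""
    passed = sum(1 for prompt, check in req.cases if check(model.complete(prompt)))
    return passed / len(req.cases)


@dataclass
class ModelDecision:
    owner: str                      # named person or team accountable for re-evaluation
    requirements: list[TaskRequirement]
    last_reviewed: date
    review_interval_days: int = 90  # assumed cadence, not from the sources cited

    def review_due(self, today: date) -> bool:
        return today - self.last_reviewed >= timedelta(days=self.review_interval_days)

    def failing_tasks(self, model: TextModel) -> list[str]:
        """Tasks where the candidate model falls below the agreed threshold."""
        return [r.task for r in self.requirements
                if score(model, r) < r.quality_threshold]


class EchoModel:
    """Stand-in for a real provider adapter; it just upper-cases the prompt."""
    def complete(self, prompt: str) -> str:
        return prompt.upper()


decision = ModelDecision(
    owner="ai-platform-team",
    requirements=[
        TaskRequirement("shouting", 1.0, [("hello", lambda out: out == "HELLO")]),
        TaskRequirement("summarizing", 0.8, [("long text", lambda out: len(out) < 5)]),
    ],
    last_reviewed=date(2026, 1, 1),
)

print(decision.failing_tasks(EchoModel()))    # ['summarizing']
print(decision.review_due(date(2026, 6, 1)))  # True
```

Because the checks run against any object that offers `complete`, a new provider can be evaluated by writing one adapter and rerunning the same record, rather than restarting the selection process.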

The Variable That Doesn’t Decay

Features converge, prices collapse, and market share reshuffles every year. The organizations that consistently make better AI model decisions are not the ones with better predictions about which provider will lead next quarter. They are the ones that built a decision process capable of absorbing change without starting from scratch each time.

The next reshuffle is already underway. The organizations that built a framework will adapt. The ones that renewed a subscription will start over.

Related: “McKinsey Has More AI Agents Than Junior Consultants” shows what it looks like when a firm applies structured decision-making to its own AI workforce transformation, redesigning roles rather than defaulting to the status quo.